»Privacy won't come back overnight«

With styling tips and anti-drone fashion, he takes on surveillance: Adam Harvey wants to bring some fun into the uncomfortable debate about data protection and privacy. In this interview, he explains why that matters and where things could go from here. This text is available in German and English.

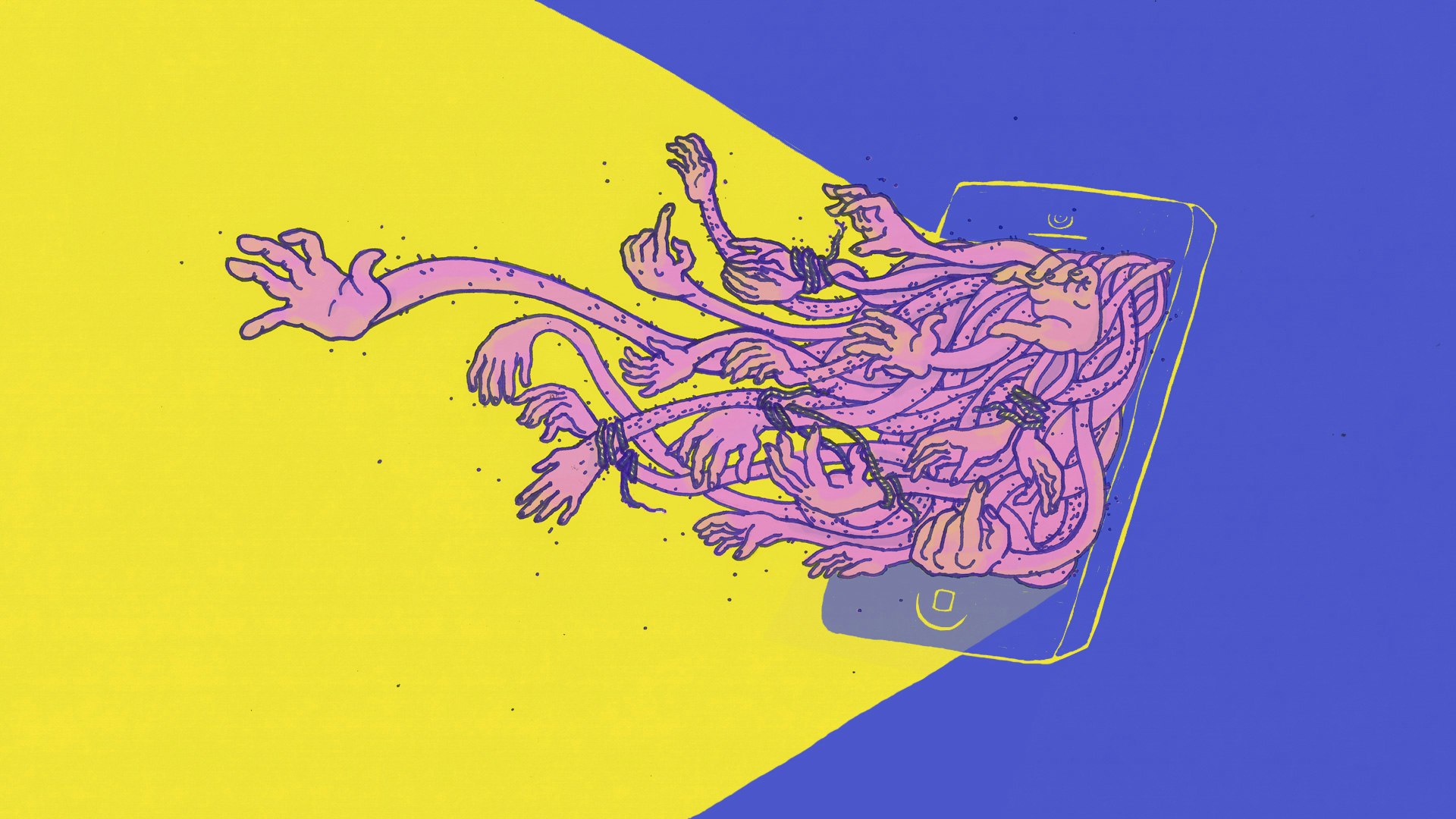

Have you ever thought about choosing special make-up to hide from video surveillance? Probably not – but it works! Adam Harvey is an artist and engineer working on creative ways to adapt to global mass surveillance. Besides

Five months ago, he moved from New York to Berlin. Now he talks with me about art, surveillance, and democracy.

With illustrations by Ronja Schweer for Perspective Daily